New Virtual Reality Display Uses Optical Mapping To Ease Eye Fatigue

By Jof Enriquez

A new type of 3D display being developed by researchers at the University of Illinois at Urbana-Champaign could pave the way for augmented reality (AR) and virtual reality (VR) headsets that do not cause the headaches, nausea, and eye fatigue commonly reported by users.

Developers of advanced VR and AR systems, such as the Oculus Rift or Samsung Gear VR, so far have not fully overcome the root cause of these symptoms – which scientists call the vergence-accommodation conflict.

In the real world, human eyes rely on two coupled mechanisms to view objects in the environment: vergence (rotating both eyes toward a common point to prevent double vision) and accommodation (focusing the lens to prevent blurriness). Current stereoscopic headsets, however, uncouple these two interlinked processes because they present a separate 2D image to each eye to create a 3D image: the eyes converge at the apparent depth of the virtual object while staying focused at the fixed distance of the screen. Over time, the eyes get tired from the unnatural effort to fuse the images.
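To get a rough sense of the size of this mismatch, the short sketch below computes the vergence angle for a virtual object and the gap between the focus demand of that object and of the screen. It is only an illustration: the function names, the 63 mm interpupillary distance, and the 2 m screen focal distance are assumptions chosen for the example, not figures from the researchers.

```python
import math


def vergence_angle_deg(ipd_m, distance_m):
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m (simple symmetric geometry)."""
    return math.degrees(2 * math.atan((ipd_m / 2) / distance_m))


def accommodation_diopters(distance_m):
    """Focus demand in diopters is the reciprocal of the distance in meters."""
    return 1.0 / distance_m


def conflict_diopters(virtual_distance_m, screen_distance_m):
    """Mismatch between where the eyes converge (the virtual object)
    and where they must focus (the fixed screen image)."""
    return abs(accommodation_diopters(virtual_distance_m)
               - accommodation_diopters(screen_distance_m))


# Assumed example values, not taken from the article:
IPD_M = 0.063            # hypothetical interpupillary distance
SCREEN_DISTANCE_M = 2.0  # hypothetical fixed focal distance of a headset

angle = vergence_angle_deg(IPD_M, 0.5)
conflict = conflict_diopters(0.5, SCREEN_DISTANCE_M)
print(f"vergence angle: {angle:.2f} deg, conflict: {conflict:.2f} D")
```

Under these assumed numbers, a virtual object rendered half a meter away asks the eyes to converge as if it were close while they keep focusing four times farther away.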

Now, engineers Liang Gao and Wei Cui, from the University of Illinois at Urbana-Champaign, have unveiled a new type of 3D display that can trick the eye into perceiving virtual and augmented worlds without the adverse effects that usually come with the immersive experience.

“We want to replace currently used AR and VR optical display modules with our 3D display to get rid of eye fatigue problems,” said Gao in a press release. “Our method could lead to a new generation of 3D displays that can be integrated into any type of AR glasses or VR headset.”

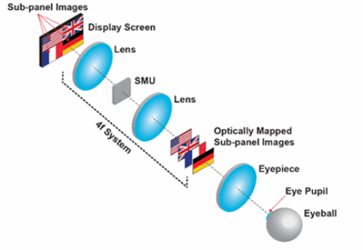

This technique, called optical mapping, involves dividing an organic light-emitting diode (OLED) display into four subpanels that each create a 2D picture. A spatial multiplexing unit (SMU) consisting of spatial light modulators shifts these four 2D images to different depths while aligning the centers of the images with the viewing axis. When looking through the eyepiece, the user sees each of the images at a different depth. An algorithm then "blends" the images into a single 3D image.
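The two software-side ideas in this description, carving one panel into four sub-images and blending content across depth planes, can be sketched in a few lines. This is not the researchers' algorithm: the 2x2 subpanel layout, the plane depths, and the linear depth-weighting rule (a scheme commonly used in multi-plane displays) are all assumptions made for illustration, and the function names are hypothetical.

```python
def split_into_subpanels(frame):
    """Split a frame (list of pixel rows) into four quadrants.
    The 2x2 layout is an assumption; the article only says 'four subpanels'."""
    half_h, half_w = len(frame) // 2, len(frame[0]) // 2
    return [
        [row[:half_w] for row in frame[:half_h]],  # top-left
        [row[half_w:] for row in frame[:half_h]],  # top-right
        [row[:half_w] for row in frame[half_h:]],  # bottom-left
        [row[half_w:] for row in frame[half_h:]],  # bottom-right
    ]


def blend_weights(depth_d, plane_depths_d):
    """Linear depth-blending weights for a point at depth_d (diopters).
    plane_depths_d must be sorted ascending. The point's intensity is shared
    between the two nearest planes; points outside the range go to the
    closest plane."""
    weights = [0.0] * len(plane_depths_d)
    if depth_d <= plane_depths_d[0]:
        weights[0] = 1.0
        return weights
    if depth_d >= plane_depths_d[-1]:
        weights[-1] = 1.0
        return weights
    for i in range(len(plane_depths_d) - 1):
        lo, hi = plane_depths_d[i], plane_depths_d[i + 1]
        if lo <= depth_d <= hi:
            t = (depth_d - lo) / (hi - lo)
            weights[i], weights[i + 1] = 1.0 - t, t
            break
    return weights


# Hypothetical plane depths in diopters, one per subpanel image.
PLANES_D = [0.0, 1.0, 2.0, 3.0]

frame = [[r * 4 + c for c in range(4)] for r in range(4)]  # toy 4x4 "display"
subpanels = split_into_subpanels(frame)
print(len(subpanels), blend_weights(1.25, PLANES_D))
```

In this sketch, a point that lies between two planes is drawn on both with complementary intensities, so the eye perceives it at an intermediate depth. In the actual device, the shifting of each sub-image to its depth is done optically by the SMU, not in software.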

The researchers said the device provides the focal cues necessary to perceive depth as our eyes naturally do. They are continuing to develop the system in anticipation of partnerships with companies developing VR and AR headsets.

“In the future, we want to replace the spatial light modulators with another optical component such as a volume holography grating,” said Gao. “In addition to being smaller, these gratings don’t actively consume power, which would make our device even more compact and increase its suitability for VR headsets or AR glasses.”

In related work, Stanford researchers are developing personalized VR displays that take into account individual users' eyesight to tweak and improve the visual experience. Their adaptive focus display technology also has the potential to alleviate the eyestrain that can cause discomfort or headaches among VR users.